Briefing

The foundational challenge in blockchain-secured Federated Learning is finding a consensus mechanism that is simultaneously energy-efficient, decentralized, and privacy-preserving: traditional methods such as Proof-of-Stake risk centralization, while learning-based approaches expose sensitive model parameters. This research proposes the Zero-Knowledge Proof of Training (ZKPoT) consensus, a mechanism that uses zk-SNARKs to let participants cryptographically prove the accuracy and integrity of their locally trained models against a public dataset without disclosing the underlying model weights or private training data. The core breakthrough is decoupling consensus from resource expenditure and capital stake, basing it instead on verifiable, private computation. The result points toward a blockchain architecture in which network security and leader selection are tied to verifiable, utility-generating work, which could enable scalable and private decentralized artificial intelligence applications.

Context

The integration of blockchain and Federated Learning has been constrained by a trade-off between efficiency, decentralization, and data privacy. Among conventional protocols, Proof-of-Work is computationally expensive and Proof-of-Stake is prone to centralization. A newer approach, learning-based consensus, uses model training itself as the consensus task, yet this risks exposing sensitive information through gradient or model sharing.

Prevailing privacy-enhancing techniques, such as Differential Privacy, add noise to safeguard data, but this often degrades model accuracy and overall utility, an unacceptable compromise for high-stakes collaborative AI systems. The field needed a mechanism that could guarantee both the quality of a contributor’s work and the confidentiality of their data.

Analysis

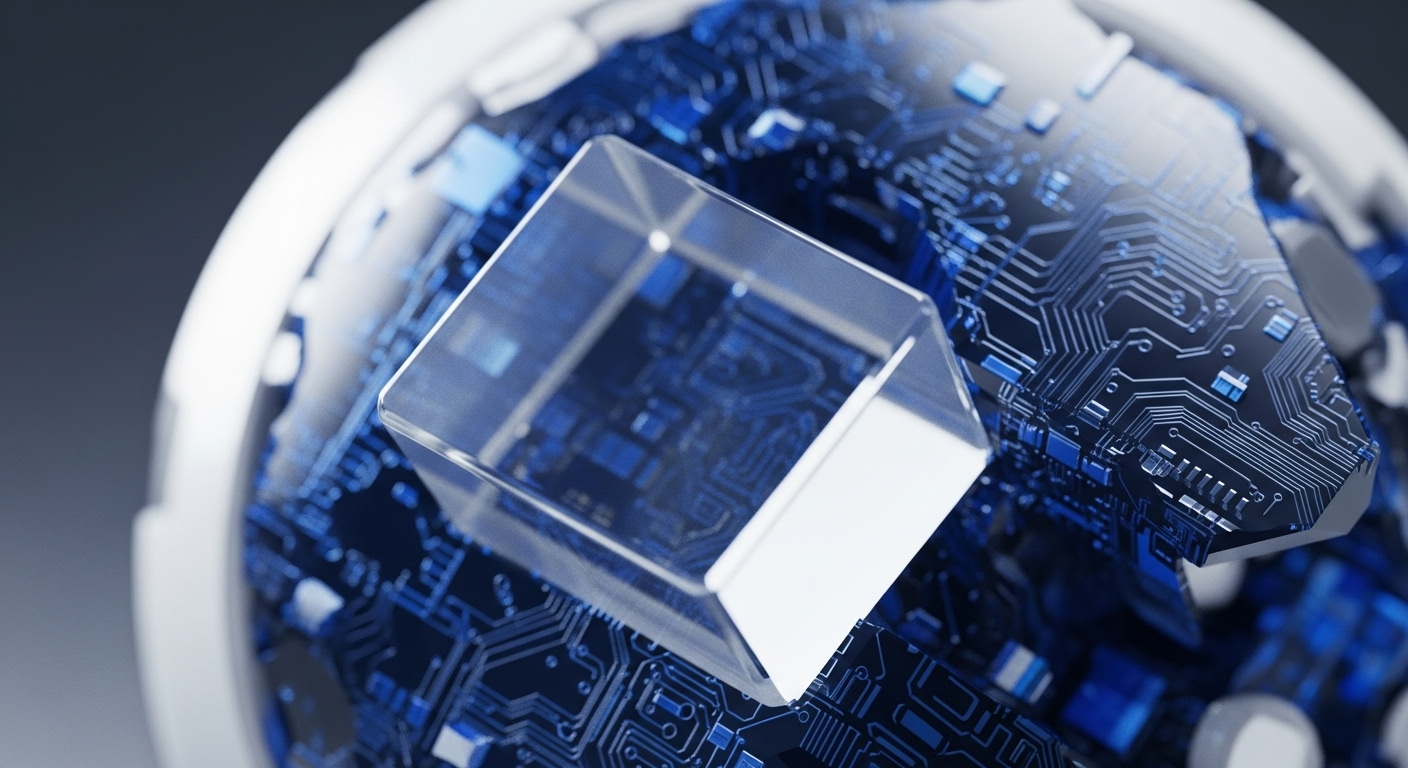

The ZKPoT mechanism turns the verification of model training into a succinct cryptographic proof, applying an existing primitive, the zk-SNARK (Zero-Knowledge Succinct Non-Interactive Argument of Knowledge), to consensus. A client first trains their model locally on private data, then uses a zk-SNARK protocol to construct a proof. This proof attests that the client correctly executed the training process and that the resulting model achieves a predetermined level of performance (e.g., accuracy) on a public test set.
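To make the proven statement concrete, the following minimal sketch writes out, in plain Python, the accuracy part of the relation such a proof would attest to. It is not a zk-SNARK and it does not cover the claim about correct training execution: it only shows which values are public and which stay private. The linear classifier, the salted SHA-256 commitment, and the names `PublicInputs`, `commit`, and `relation_holds` are illustrative assumptions, not details taken from the ZKPoT paper.

```python
import hashlib
import struct
from dataclasses import dataclass
from typing import List, Sequence, Tuple

Example = Tuple[Sequence[float], int]  # (features, binary label)


@dataclass(frozen=True)
class PublicInputs:
    """Values every verifier can see (hypothetical layout)."""
    test_set: List[Example]
    accuracy_threshold: float
    model_commitment: str  # binds the prover to one hidden set of weights


def commit(weights: Sequence[float], salt: bytes) -> str:
    """Salted hash commitment to the weights (an assumed scheme)."""
    payload = salt + b"".join(struct.pack(">d", w) for w in weights)
    return hashlib.sha256(payload).hexdigest()


def predict(weights: Sequence[float], features: Sequence[float]) -> int:
    """Toy linear classifier; the last weight acts as the bias."""
    score = sum(w * x for w, x in zip(weights, features)) + weights[-1]
    return 1 if score >= 0 else 0


def relation_holds(public: PublicInputs,
                   weights: Sequence[float],
                   salt: bytes) -> bool:
    """The statement a prover would show in zero knowledge.

    `weights` and `salt` are the private witness; in a real system
    they never leave the client.
    """
    if commit(weights, salt) != public.model_commitment:
        return False
    correct = sum(predict(weights, x) == y for x, y in public.test_set)
    return correct / len(public.test_set) >= public.accuracy_threshold
```

In an actual deployment this check would be compiled into an arithmetic circuit, and the client would publish only a proof that `relation_holds` evaluates to true for some witness it knows.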

The key conceptual shift is that blockchain verifiers neither execute the training nor inspect the model’s parameters; they only check the validity of the succinct proof. This ensures the integrity of the contribution while keeping the sensitive data and model weights hidden, replacing trust in an external party with a cryptographic guarantee (a zk-SNARK is an argument, so its soundness holds against computationally bounded provers).
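The verifier's side of this exchange can be sketched as follows. The example only illustrates the data flow: a verifier handles a commitment, a claimed threshold, and proof bytes, and never receives weights or training data. The `Submission` layout and the `ProofVerifier` signature are hypothetical, and the zk-SNARK verification routine is passed in as a callable rather than implemented.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass(frozen=True)
class Submission:
    """What a client publishes (hypothetical layout); no weights, no data."""
    client_id: str
    model_commitment: str
    accuracy_threshold: float
    proof: bytes


# Assumed signature for a zk-SNARK verification routine:
# (commitment, threshold, proof) -> True if the proof is valid.
ProofVerifier = Callable[[str, float, bytes], bool]


def accepted_clients(submissions: Iterable[Submission],
                     verify: ProofVerifier,
                     required_threshold: float) -> List[str]:
    """Return the clients whose proofs check out at the required threshold."""
    accepted = []
    for s in submissions:
        # A proof for a weaker claim than the network requires is rejected
        # without running the verifier at all.
        if s.accuracy_threshold < required_threshold:
            continue
        if verify(s.model_commitment, s.accuracy_threshold, s.proof):
            accepted.append(s.client_id)
    return accepted
```

Because the verifier's work depends only on the proof and the public inputs, it does not grow with the cost of training the model.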

Parameters

- Cryptographic Primitive → zk-SNARKs (Zero-Knowledge Succinct Non-Interactive Arguments of Knowledge) are used for proof generation and verification.

- Verifiable Metric → Model Accuracy (The specific performance metric that is proven to be above a threshold without revealing the model parameters).

- Security Achievement → Byzantine Fault Resilience (The system demonstrates robustness against attacks from malicious participants without compromising data privacy).

- Efficiency Gain → Reduced On-Chain Overhead (IPFS is utilized for storing models and proofs, minimizing the communication and storage costs on the blockchain ledger).
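The off-chain storage pattern in the last parameter can be sketched as below. This is a simplification under stated assumptions: an in-memory dictionary stands in for IPFS, and a plain SHA-256 hex digest stands in for a real IPFS content identifier, which uses a different encoding. The names `OffChainStore` and `record_submission` are hypothetical.

```python
import hashlib
from typing import Dict, List


class OffChainStore:
    """In-memory stand-in for a content-addressed store such as IPFS."""

    def __init__(self) -> None:
        self._blobs: Dict[str, bytes] = {}

    def put(self, data: bytes) -> str:
        """Store a blob and return its content address (SHA-256 hex here)."""
        digest = hashlib.sha256(data).hexdigest()
        self._blobs[digest] = data
        return digest

    def get(self, digest: str) -> bytes:
        """Fetch a blob and check that it still matches its address."""
        data = self._blobs[digest]
        if hashlib.sha256(data).hexdigest() != digest:
            raise ValueError("stored blob does not match its address")
        return data


def record_submission(ledger: List[dict], store: OffChainStore,
                      client_id: str, model_blob: bytes,
                      proof_blob: bytes) -> dict:
    """Keep large artifacts off-chain; append only fixed-size references."""
    entry = {
        "client": client_id,
        "model_ref": store.put(model_blob),
        "proof_ref": store.put(proof_blob),
    }
    ledger.append(entry)
    return entry
```

Whatever the size of the model or proof, each ledger entry in this sketch carries two 64-character references, which is the source of the reduced on-chain overhead.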

Outlook

The ZKPoT framework establishes a new paradigm for decentralized systems where consensus is provably tied to utility-generating computation, moving beyond simple resource expenditure. The immediate next steps involve optimizing the computationally intensive quantization and proof generation steps for real-world, high-dimensional machine learning models. In the next three to five years, this theory is positioned to unlock a new generation of decentralized applications, including secure medical data analysis networks, collaborative financial fraud detection systems, and privacy-preserving data marketplaces, where contributors can be compensated for the verifiable quality of their data or computation without ever revealing the underlying assets. This opens up new avenues of research into verifiable computing for all types of complex, off-chain computation.
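The quantization step mentioned above exists because zk-SNARK circuits operate on finite-field integers rather than floating-point numbers. A minimal sketch of one common approach, fixed-point encoding into a prime field, is shown here. The field modulus, the 16-bit scale, and the function names are illustrative assumptions and are not taken from the ZKPoT paper.

```python
from typing import List, Sequence

FIELD_PRIME = 2**61 - 1   # illustrative prime modulus (assumption)
SCALE_BITS = 16           # fractional bits kept per weight (assumption)
SCALE = 1 << SCALE_BITS


def quantize(weights: Sequence[float]) -> List[int]:
    """Map floats to field elements using fixed-point rounding.

    Negative values wrap around the modulus, so -x becomes FIELD_PRIME - x.
    """
    return [round(w * SCALE) % FIELD_PRIME for w in weights]


def dequantize(elements: Sequence[int]) -> List[float]:
    """Invert `quantize`, reading the upper half of the field as negatives."""
    half = FIELD_PRIME // 2
    values = []
    for e in elements:
        signed = e - FIELD_PRIME if e > half else e
        values.append(signed / SCALE)
    return values
```

Each weight is recovered to within half of one fixed-point step, 2^-17 at this scale. More fractional bits reduce the rounding error but enlarge the values the circuit has to handle, which is one reason this step is a target for optimization.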

Verdict

The Zero-Knowledge Proof of Training mechanism is a foundational theoretical advance, establishing a secure, scalable, and private path for integrating complex verifiable computation directly into the core blockchain consensus layer.